Data without context is just high fidelity trivia

It’s all relative anyways

I’m willing to bet if you walked outside you couldn’t tell me the temperature to within 5 degrees. Our bodies are built to detect change, not state; we don’t know the temperature of the room, only that it’s warmer than the hallway. You can feel the elevator accelerate, but once it’s moving you have no idea how fast you’re actually going. We’re not adapted to step out and say ‘Oh, it’s 36.7 degrees, I should go back and grab a coat’. Instead you open the door, sense a rapid heat transfer from your skin and lungs, and your body signals a critical thermal gradient. Panic. This fact may be intuitive, but it conflicts with the instinct of the engineer who seeks to objectively quantify everything.

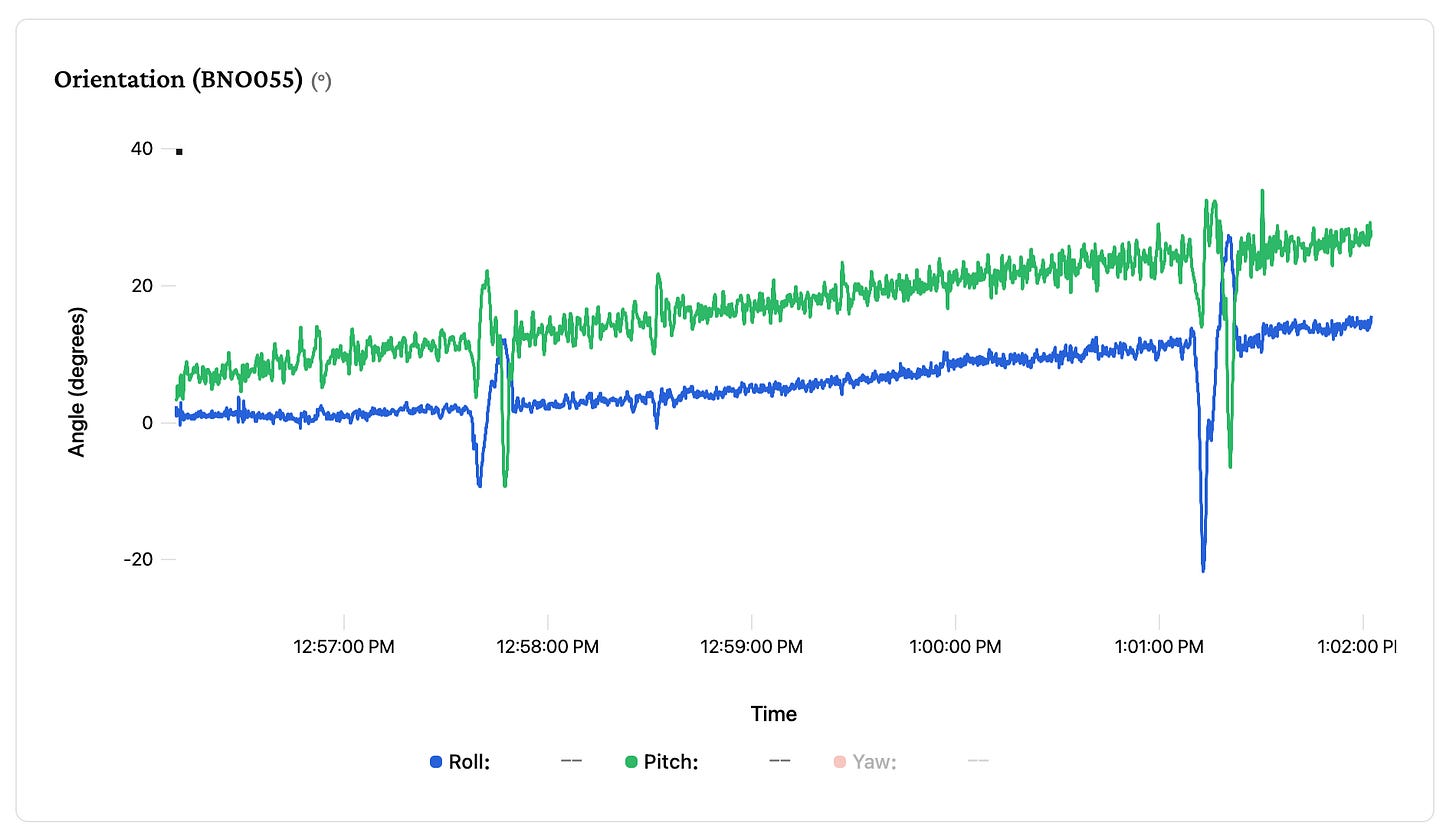

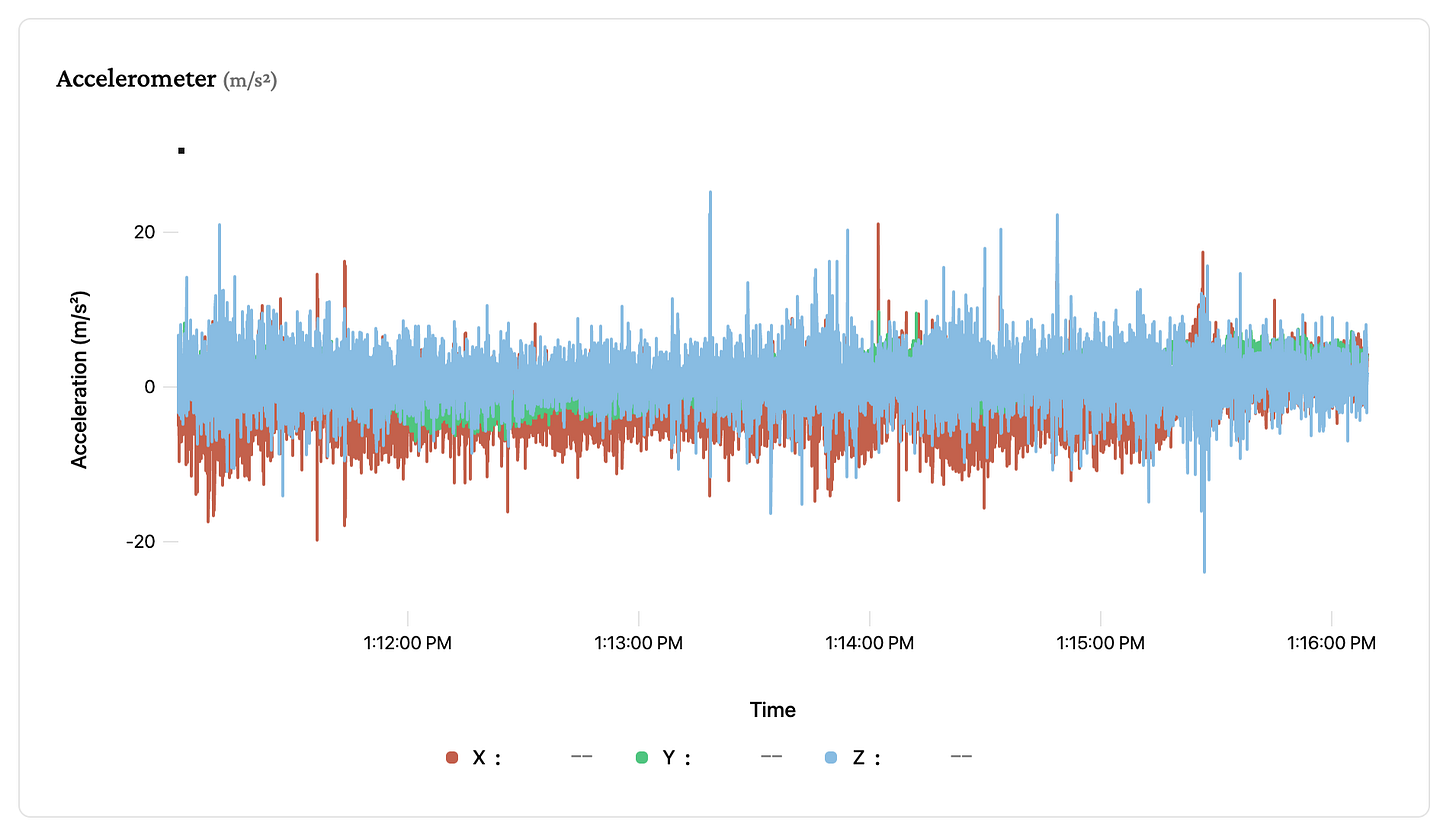

This reality is currently ruining my bike physics modeling project. I have reached the ‘valley of death’ for hardware projects: I can measure everything, but I understand nothing. When I first strapped an IMU (inertial measurement unit) to my bike, the telemetry looked like garbage. The raw acceleration and gyroscope charts looked like fuzzy caterpillars with wild, rapid oscillations on all axes drowning out any visible signal.

The consumer-grade chip I’m using has built-in sensor fusion, giving me a calculated “orientation” output that should represent the roll/pitch/yaw of the bike at any given point in time. It looks right initially, tracking turns and leans, but after 10 minutes of flat riding it was telling me the bike was climbing a steep San Francisco street. This chip is designed for smartphones and VR headsets, and I’m asking a lot of it by trying to separate steering corrections from high-frequency road noise.

Fix it in post

The engineering instinct is to chase objective ground truth. The data was incredibly noisy with a weak signal buried deep, so the obvious solution was to lower the noise floor with vibration damping and tease the signal out. This is directionally correct, but it became a rabbit hole. I tried Sorbothane rubber damping. I tried software low-pass filters. I even designed and ordered custom machined brass counterweights to mass-load the sensor, hoping to mechanically filter the vibrations. While the damping smoothed out the fuzzy ‘caterpillars’ (high-frequency noise), it was powerless against the silent, accumulating drift (bias instability) that gradually convinced the sensor that ‘down’ was ‘forward’. I spent weeks engineering workarounds to save a $30 chip from itself. It helped, but there was no magic bullet.
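To make the noise-versus-drift distinction concrete, here is a minimal sketch on synthetic data (not my ride logs). It assumes a simple exponential moving average as the low-pass filter and a made-up linear drift rate; the names `low_pass`, `jitter`, and `DRIFT_PER_SAMPLE` are hypothetical and exist only for illustration.

```python
import random

def low_pass(samples, alpha=0.1):
    """Exponential moving average: a basic first-order low-pass filter."""
    state = samples[0]
    filtered = []
    for x in samples:
        state = alpha * x + (1 - alpha) * state
        filtered.append(state)
    return filtered

def jitter(samples):
    """Mean absolute sample-to-sample change, a crude measure of 'fuzz'."""
    diffs = [abs(b - a) for a, b in zip(samples, samples[1:])]
    return sum(diffs) / len(diffs)

# Synthetic "pitch" trace in degrees: the true value is 0 the whole time.
# Assumed, illustrative numbers: vibration noise of 1 degree (std dev)
# plus a slow drift of 0.002 degrees per sample.
random.seed(0)
N = 5000
DRIFT_PER_SAMPLE = 0.002
raw = [random.gauss(0.0, 1.0) + DRIFT_PER_SAMPLE * i for i in range(N)]
smooth = low_pass(raw)

print(f"jitter raw:    {jitter(raw):.3f} deg/sample")
print(f"jitter smooth: {jitter(smooth):.3f} deg/sample")
print(f"final smoothed value: {smooth[-1]:.1f} deg (true value is 0)")
```

The filter shrinks the caterpillar, but the final value still sits roughly 10 degrees away from the truth: drift lives at frequencies far below anything a low-pass filter removes.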

The term “sensor fusion” can mean anything from a trivial problem to an impossible one. It could mean a simple overlay of your speed on the elevation graph (like Strava does), or it can mean graduate-level data processing pipelines that dynamically compute ‘trust’ of each input based on world modeling and recursive looping. I realized I wasn’t going to beat the hardware engineers at Bosch who have been tuning this chip for decades without turning this side quest into a PhD thesis.

The challenge now is how to evaluate the data. I see a sustained drop in the “roll” axis right before a corner (that’s a lean event!), but there’s a corresponding increase in the pitch axis data because I was going downhill when I did it. So how much did I actually lean the bike? Do I sum them or subtract them? What about lateral g-forces? This is all ‘correct and objective’ truth, but it’s a fundamentally flawed approach. Orientation lies because I’m asking a cheap piece of silicon to find “down” while I’m subjecting it to cornering forces that pull “down” sideways.
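A rough worked example of how far cornering pulls “down” sideways, assuming an idealized steady turn at speed $v$ on a radius $r$ (illustrative numbers, not measured data). The accelerometer cannot separate gravity from centripetal acceleration, so the “down” it senses tilts away from true vertical by an angle $\varphi$:

$$
\tan\varphi = \frac{a_{\text{lat}}}{g} = \frac{v^2}{r\,g}
$$

With $v = 13.4\ \text{m/s}$ (about 30 mph) and an assumed $r = 30\ \text{m}$:

$$
\tan\varphi = \frac{13.4^2}{30 \times 9.81} \approx 0.61, \qquad \varphi \approx 31^\circ
$$

In a balanced lean the bike tilts by roughly that same angle, so in this idealized case the accelerometer sees the combined force lined up with the bike’s own vertical axis: about 1.17 g straight “down” through the frame and almost nothing sideways. Any fusion algorithm that leans on the accelerometer to find gravity is being fed a reference that is about 31 degrees wrong for the whole corner.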

I’m asking someone to walk in a straight line on the deck of a ship in a storm. Eventually, they’re going to stumble, and what does ‘straight’ mean anyways?

Data without context is just high fidelity trivia

I have a highly optimized, fully indexed database containing half a million samples of noise. So what? Physical damping lowered the noise floor, but a carbon road bike at 30mph is essentially a tuning fork. I can stare at my ‘roll’ chart and see each lean; the data is there. However, if I create a basic turn detector algorithm (rate of change of roll > x), it will give me countless false positives when I hesitate or correct. And if I want to know how much I leaned, I have to compare the peak against a baseline, but my baseline is drifting because the sensor is confused.
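Here is a minimal sketch of that basic detector and why it over-counts, run on a hand-built roll trace rather than real data. The thresholds, the 100 Hz sample rate, and the helper names (`rate_exceedances`, `group_runs`) are all assumptions for illustration. The minimum-duration filter at the end is one possible mitigation I am swapping in, not something the chip provides.

```python
def rate_exceedances(roll_deg, dt, rate_threshold):
    """Indices where |d(roll)/dt| exceeds the threshold (deg/s)."""
    hits = []
    for i in range(1, len(roll_deg)):
        rate = (roll_deg[i] - roll_deg[i - 1]) / dt
        if abs(rate) > rate_threshold:
            hits.append(i)
    return hits

def group_runs(indices):
    """Group consecutive indices into [start, length] runs."""
    runs = []
    for i in indices:
        if runs and i == runs[-1][0] + runs[-1][1]:
            runs[-1][1] += 1
        else:
            runs.append([i, 1])
    return runs

# Hand-built roll trace in degrees, sampled at an assumed 100 Hz.
DT = 0.01
roll = []
current = 0.0

def hold(n):
    for _ in range(n):
        roll.append(current)

def ramp(n, step):
    global current
    for _ in range(n):
        current += step
        roll.append(current)

hold(100)                    # riding straight
ramp(5, 0.4); ramp(5, -0.4)  # hesitation twitch: 2 degrees and back
hold(100)
ramp(100, 0.3)               # the one real lean: 0 to 30 degrees over 1 s
hold(50)
ramp(5, -0.4); ramp(5, 0.4)  # mid-corner correction
hold(50)

runs = group_runs(rate_exceedances(roll, DT, rate_threshold=20.0))
naive_count = len(runs)
# Possible mitigation (assumed): require the rate to stay high for 0.5 s.
sustained_count = sum(1 for _, length in runs if length >= 50)

print(naive_count, sustained_count)  # 3 events flagged, 1 survives the filter
```

The duration filter rescues this toy trace, but it would also throw away a genuinely quick flick into a corner, and it does nothing about the drifting baseline needed to answer “how much did I lean”.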

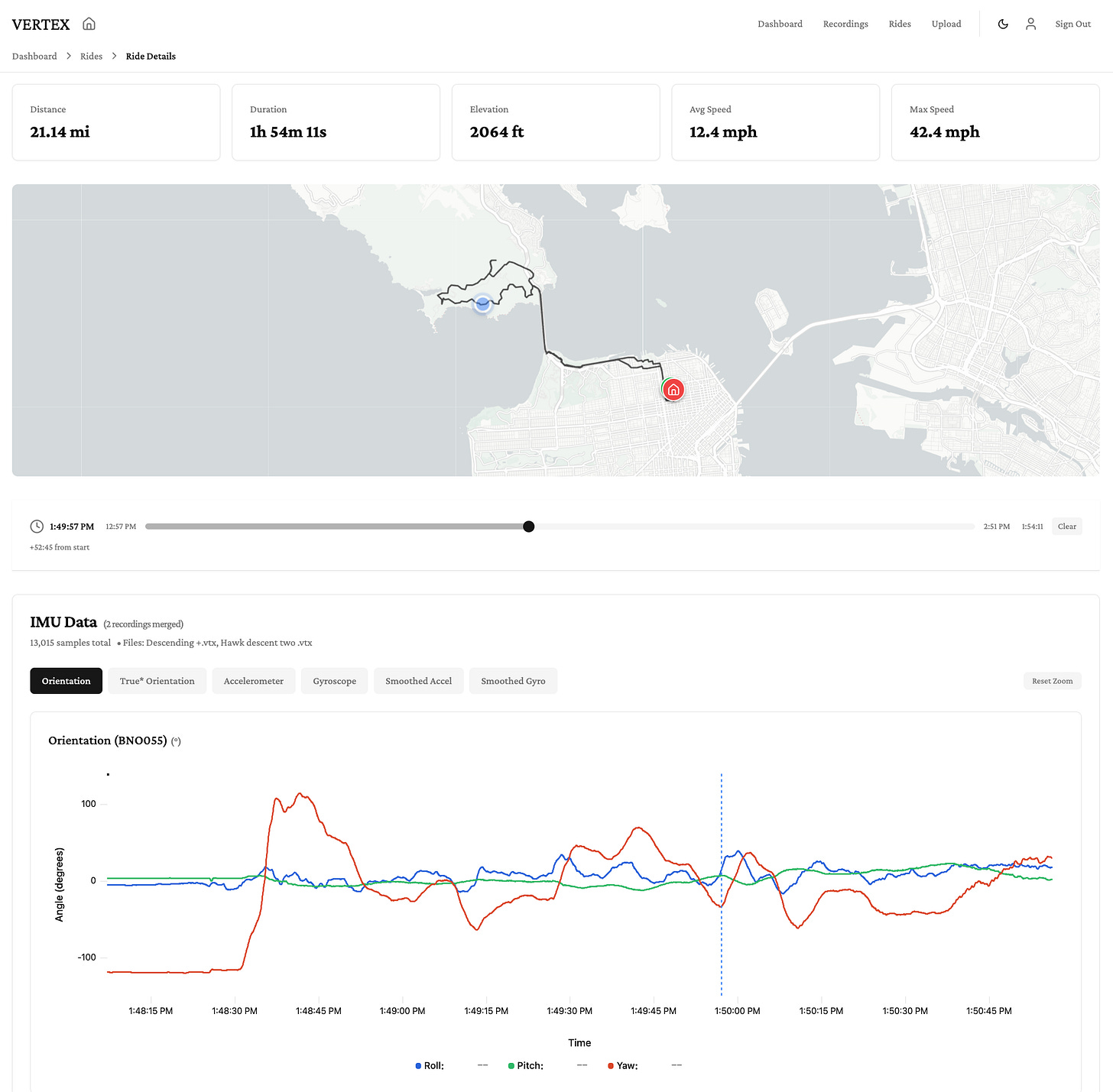

I will continue to improve the hardware, but when I think about the value here, it’s not whether I “leaned 37 degrees at the apex”, it’s whether I “leaned more than yesterday”, or whether I ‘let off the brakes at the same point as my racer friend’. This takes me back to my discussion of temperature: I’ve realized I don’t need to build a thermometer. I don’t need to know the precise value to know I’m chickening out from taking the fastest line; I just need a better reference frame.

I’ve been trying to grade my own homework, staring at the data in a vacuum and trying to intuit “good riding” from noisy gyroscopes. A 0.4g braking event is just a number; it implies force, but it doesn’t imply intent, and it certainly doesn’t tell me if that force was necessary. I need to zoom out and change the reference frame. Instead of chasing the delusion of absolute truth in my Euler angles, I plan to chase better.

For example, if I want to improve my descending performance, the most valuable data point isn’t the turn itself, it’s the silence before it. I expect the drift to ruin the absolute cornering data, but the accelerometer is perfect at detecting the “coasting gap”, or the hesitation time between letting off the brakes and initiating the turn. That 0.5 second gap is pure fear, and no amount of sensor drift can hide it.
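A minimal sketch of how the coasting gap could be pulled out of a log, assuming two aligned channels: longitudinal acceleration in g (negative while braking) and roll rate in deg/s straight from the gyro. Using roll rate for turn-in is my assumption here, as are the thresholds and the function name `coasting_gap`. The idea is that both cues are fast changes rather than absolute angles, so slow drift should matter much less.

```python
def coasting_gap(long_accel_g, roll_rate_dps, dt, brake_g=0.15, turn_dps=15.0):
    """Seconds between brake release and turn-in, or None if not found.

    brake_g and turn_dps are hypothetical thresholds to tune per bike.
    """
    braking = [a < -brake_g for a in long_accel_g]
    if True not in braking:
        return None  # never braked
    start = braking.index(True)
    # Brake release: first sample after braking starts that is not braking.
    release = next(
        (i for i in range(start, len(braking)) if not braking[i]), None
    )
    if release is None:
        return None  # still on the brakes at the end of the log
    # Turn-in: first sample after release with a large roll rate.
    turn_in = next(
        (i for i in range(release, len(roll_rate_dps))
         if abs(roll_rate_dps[i]) > turn_dps),
        None,
    )
    if turn_in is None:
        return None  # never turned in
    return (turn_in - release) * dt

# Synthetic approach to a corner at an assumed 100 Hz:
# 1.0 s coasting, 1.5 s braking at 0.4 g, then off the brakes.
DT = 0.01
accel = [0.0] * 100 + [-0.4] * 150 + [0.0] * 150
# Roll rate stays flat until 3.0 s, then the bike tips in at 30 deg/s.
roll_rate = [0.0] * 300 + [30.0] * 100

gap = coasting_gap(accel, roll_rate, DT)
print(f"coasting gap: {gap:.2f} s")
```

On real data both channels would need smoothing before thresholding, otherwise vibration spikes would trigger a false brake release or turn-in.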

All of that to say: I’m planning to strap a unit to one of my much more experienced friends, someone significantly faster and smoother than me, and we’re going to ride the same descent, on the same hardware, seconds apart. The only way to turn noise into insight is to overlay my hesitation against their confidence, and see exactly where the gap lies.

Ben

Correlation between animals and Vertex, they can sense fear